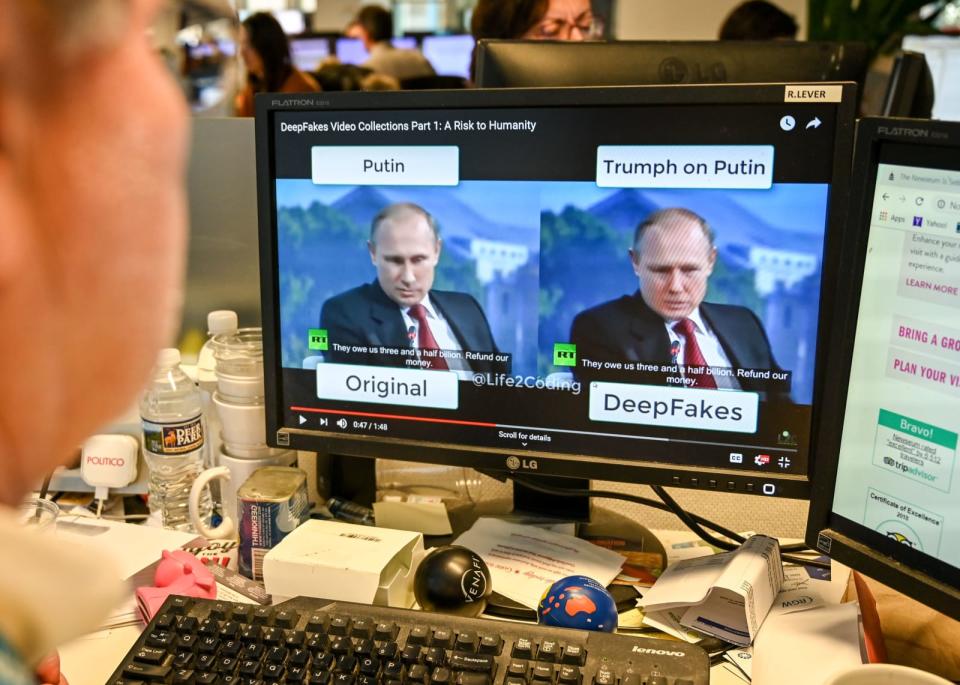

Twitter reveals how it plans to address deepfakes

It wants you to weigh in on draft guidelines for handling 'synthetic and manipulated media.'

Twitter said last month it was working on ways to better handle deepfakes. It just released draft guidelines on how to address the problem, and it's asking the public to weigh in and help shape policies on what it describes as "synthetic and manipulated media."

Twitter says it'll define synthetic and manipulated media as "any photo, audio, or video that has been significantly altered or fabricated in a way that intends to mislead people or changes its original meaning." It noted such manipulations are often called deepfakes and shallowfakes.

As the guidelines stand, when Twitter discovers deepfakes on its service, it may:

- place a notice next to Tweets that share synthetic or manipulated media;
- warn people before they share or like Tweets with synthetic or manipulated media; or
- add a link -- for example, to a news article or Twitter Moment -- so that people can read more about why various sources believe the media is synthetic or manipulated.

If Twitter determines a tweet that includes a deepfake or shallowfake "could threaten someone's physical safety or lead to other serious harm," it might just remove the tweet entirely. Twitter already banned porn deepfakes last year.

The guidelines aren't yet set in stone, and Twitter's looking for feedback through a survey and tweets with the hashtag #TwitterPolicyFeedback. You'll have until Wednesday, November 27th at 6:59 p.m. ET to weigh in. After reviewing and incorporating feedback, it'll announce a formalized version of the guidelines at least 30 days before they come into force.

Not only can deepfakes be directly damaging to people, they can be used in disinformation campaigns, including ones backed by states. Twitter is one of several major tech companies exploring ways to tackle deepfakes. Amazon, for instance, recently signed up to Facebook's Deepfake Detection Challenge, along with Microsoft, MIT and others. The group is building open-source tools to help governments and organizations identify deepfakes.

Yahoo Finance
