New Instagram feature forces angry trolls to take a breath

There’s a common saying: “If you can’t say anything nice, say nothing at all.”

It’s an approach to bullying that doesn’t necessarily fit with modern social media, where engagements, comments and ongoing conversations (and arguments) boost a post’s performance and encourage users to keep coming back.

But when it comes to users’ mental health, this isn’t always a good thing, Instagram has this week acknowledged.

In a blog post, head of Instagram Adam Mosseri explained the social media platform will now actively work to prevent bullying behaviour, even if it results in poorer engagement and fewer comments.

“Online bullying is a complex issue,” he said.

“For years now, we have used artificial intelligence to detect bullying and other types of harmful content in comments, photos and videos. As our community grows, so does our investment in technology. This is especially crucial for teens since they are less likely to report online bullying even when they are the ones who experience it the most.”

The next step is to proactively prevent people from making nasty comments.

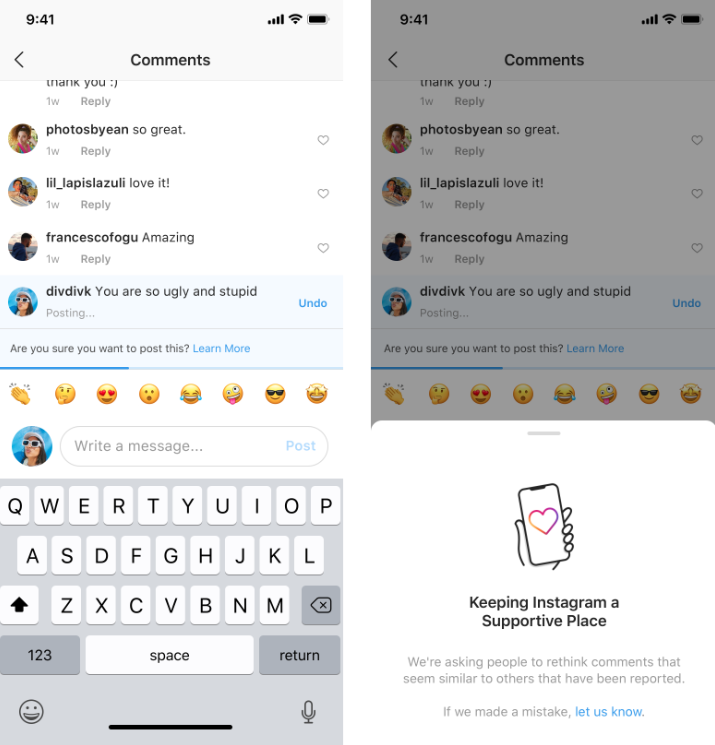

In the last week or so, Instagram has begun rolling out an artificial intelligence-powered feature that warns people that their comment may be considered offensive before they post it.

Mosseri said this tool will help people reflect on their comment before they post it.

“From early tests of this feature, we have found that it encourages some people to undo their comment and share something less hurtful once they have had a chance to reflect,” he said.

Additionally, Instagram is introducing a feature allowing users to restrict comment notifications from certain people and make those comments only visible to the person making them.

As Mosseri explained, many younger users are reluctant to block, report or unfollow a bully as it can actually escalate the situation - particularly if they spend time with their bully in real life, like at high school.

“Soon, we will begin testing a new way to protect your account from unwanted interactions called Restrict. Once you Restrict someone, comments on your posts from that person will only be visible to that person,” he said.

“You can choose to make a restricted person’s comments visible to others by approving their comments. Restricted people won’t be able to see when you’re active on Instagram or when you’ve read their direct messages.”

Instagram happy to sacrifice users in the name of kindness

Speaking to Time, Mosseri said Instagram wants to lead the industry in taking on bullying.

“We will make decisions that mean people use Instagram less if it keeps people more safe,” he said.

Although he expressed concern about overstepping and triggering accusations of restricting free speech, Mosseri said if the platform fails to address cruel behaviour it will mean the brand suffers greater reputational damage over time.

“It could make our partnership relationships more difficult. There are all sorts of ways it could strain us,” Mosseri said.

“If you’re not addressing issues on your platform, I have to believe it’s going to come around and have a real cost.”

Facebook comment sections a headache for Australian publishers

Instagram’s new approach to nasty commenters comes as Australia’s publishers confront a landmark ruling finding that if a Facebook user posts a defamatory comment on a publisher’s story, the publisher is liable.

Instagram takes a substantially different approach to comment moderation from Facebook, despite being owned by the social media giant.

While Instagram allows users to turn off comments completely, Facebook does not offer the same capability.

Yahoo Finance
